Is AI Singularity a Possibility?

A brief debate on the possibility of AI singularity.

What is AI singularity?

"AI singularity", otherwise known as "The technological singularity", is a hypothetical point in time when a self-improving, artificial intelligence (AI) machine can understand and manipulate concepts within and even beyond the range of the human brain, i.e. when AI tools will develop a human-level thought process that would rapidly evolve into a form of machine consciousness empowered with cognitive capabilities that surpass natural intelligence. That way, singularity denotes the ability of an AI mechanism to think and make rational decisions independently of or even better than humans. It anticipates a conjectural future when technological growth is out of control and irreversible. It predicts the revolutionization of the world by extraordinarily intelligent and very powerful technologies.

Conflicting views on AI singularity

Science fiction portrayals

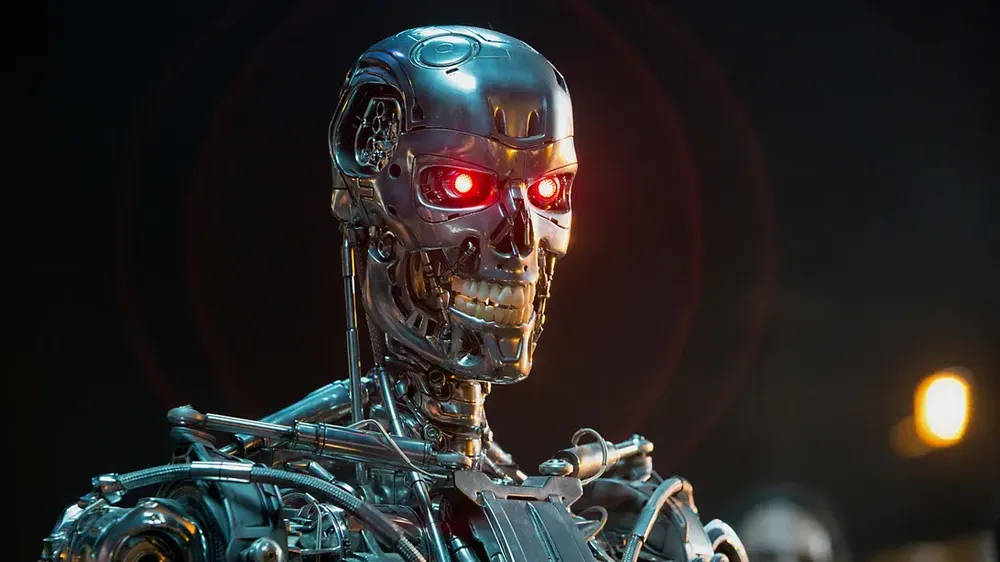

Science fiction (sci-fi) portrays AI agents as thinking computers. This is reflected in several sci-fi films about robots and technology. One of them is the 2014 film Transcendence, in which a scientist's consciousness is uploaded into a computer; the resulting machine intelligence makes rash decisions and new discoveries.

Another is the 2015 film Chappie, in which a robot is given consciousness and learns like a child. In both films, the machines make bad decisions but are eventually able to stop themselves from destroying the world. However, in the 2021 film Outside the Wire, the robot puts the world in serious danger.

Sci-fi novels also help to spread the idea of AI singularity. A classic example is Dan Brown's 2017 mystery thriller novel, Origin. Brown uses the novel to predict that, just as dinosaurs and saber-toothed tigers became extinct and paved the way for human existence, humans will become extinct and the next species to emerge will be technology. Altogether, fictional works have inspired negative speculation about AI. As a result, many people think that AI will outgrow human control and exterminate the human race.

The myth of the singularity stems from the unpredictability of the future. It depicts a tragic situation in which humans would be unable to anticipate the future actions or choices of AI. It foretells that AI machines would become unpredictable and turn against humanity, treating us in the same manner that we regard ants. As this scenario suggests, we cannot know when the singularity would happen, so we would be unprepared for it. These frightening claims prompt the question, "Is AI singularity truly a possibility?"

Scientific perspectives

Results of major surveys of AI researchers reveal differing scientific views on the feasibility of AI singularity. Most respondents believe it will happen, while others do not. On the one hand, optimists believe that machine intelligence is dynamic and growing at such an unprecedented rate that it could become more sophisticated than human intelligence. On the other hand, pessimists are convinced that AI machines can neither replicate the human brain nor exhibit any form of intelligence superior to natural intelligence. They are therefore confident that the singularity is impossible. Proponents of the singularity continue to instill fear, whereas pessimists maintain that AI machines are mere systems developed mainly to augment human efficiency, especially in the performance of routine jobs and hazardous tasks.

AI, a blessing or a threat?

Complaints that AI causes job losses and promotes bias are widespread. Yet AI has played an indispensable, complementary role in most human activities. Moreover, an AI that promotes bias does so because of the data it is trained on. So why blame AI for reflecting the biases of its training data and its developers? In practice, AI has proved to be a blessing, for it advances every aspect of life. It makes complex tasks easier and faster to accomplish in every important domain, enhancing productivity tremendously. Modern industries would be incapacitated without automated manufacturing systems. An educational system without electronic resources would make learning a crude experience. Information dissemination and record keeping across fields would be Herculean tasks without computerized systems. These are just a few instances that dispel the notion of AI as a threat to humanity.

Why single out AI from many threats to humanity?

Apart from the obvious fact that criticizing or demonizing AI does not in any way diminish its relevance to humanity, the controversy surrounding the possibility of AI singularity seems overhyped, diverting attention away from established threats. Some of these threats are linked to factors such as globalization and nuclear engineering. Globalization contributes to environmental problems like deforestation, climate change, and biodiversity loss. Nuclear engineering, in turn, makes nuclear weapons possible, and these pose serious threats to humans. So, rather than being preoccupied with a myth, I suggest that energy and resources be invested in developing and deploying AI machines for positive, beneficial purposes.

Conclusion

I regard scary sci-fi portrayals of AI as mere subjective claims, and I find convincing the scientific argument against the singularity, namely that an AI machine cannot replicate the human brain. AI machines should not be perceived as super beings in robotic shapes or as future destroyers of humankind, because anthropomorphizing or deifying AI leads to disillusionment. Thus, I conclude that there is no likelihood of the singularity happening, unless supernatural forces endow AI machines with supernatural intelligence.

Author: Udoh, Idang Aniekan

About the author

Email: idanganiekan@gmail.com

Twitter: @Amaphidell

LinkedIn: Aniekan Udoh